A product team ships an AI-powered patient triage app in four months. The app scores well in usability testing, and patients engage with it. Then a compliance audit uncovers 14 gaps in how the system handles protected health information (PHI), and the legal team halts the rollout.

This pattern repeats across enterprise healthcare app development projects. IBM Security's 2025 Cost of a Data Breach Report found that healthcare data breaches cost an average of $7.42 million per incident, the highest of any industry for the fifteenth consecutive year. The HHS Office for Civil Rights (OCR) collected $12.8 million in HIPAA penalties and settlements in 2024 alone, with the majority targeting Security Rule failures tied to risk analysis gaps.

AI compounds the compliance challenge. Machine learning models ingest large volumes of patient data to generate predictions and store the results across distributed infrastructure. Each step creates new attack surfaces and regulatory obligations that legacy app architectures never had to address.

Where AI Creates New Compliance Gaps

The central tension lies between how AI models process data and what HIPAA demands. The Privacy Rule requires covered entities to limit uses and disclosures of protected health information to the minimum necessary. AI models, by contrast, generally need large volumes of data to produce accurate results.

A clinical decision support tool that checks patients' vitals, medication history, lab reports, and demographic data to predict readmission risk touches PHI at every step. Training data, inference outputs, model weights, and audit logs can all fall under HIPAA's security requirements. Yet many engineering teams staff these projects with AI developers who understand model architecture well but lack deep familiarity with the Security Rule's administrative, physical, and technical safeguard requirements.

The problem intensifies with third-party AI services. Organizations that route PHI through external APIs for natural language processing or image recognition must execute Business Associate Agreements with each vendor. They must also verify that the vendor's infrastructure meets the same encryption, access control, and audit logging standards that HIPAA imposes on the covered entity itself.
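The BAA requirement is easier to enforce in code than by convention. The following is a minimal Python sketch, assuming a hypothetical internal vendor registry; `VendorRecord`, `baa_problem`, and `send_phi` are illustrative names, not a real library. It covers only the BAA gate, not the separate verification of a vendor's encryption, access control, and audit logging.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class VendorRecord:
    """Hypothetical compliance-registry entry for an external AI service."""
    name: str
    baa_signed: bool
    # The registry is assumed to require an expiry/review date; None means the record is incomplete.
    baa_expires: Optional[date] = None


class ComplianceError(Exception):
    """Raised when PHI would flow to a vendor without a valid BAA."""


def baa_problem(vendor: VendorRecord, today: date) -> Optional[str]:
    """Return the reason PHI must not go to this vendor, or None if the BAA is valid."""
    if not vendor.baa_signed or vendor.baa_expires is None:
        return f"no signed BAA on file for {vendor.name}"
    if vendor.baa_expires < today:
        return f"BAA for {vendor.name} expired on {vendor.baa_expires.isoformat()}"
    return None


def send_phi(vendor: VendorRecord, payload: dict, today: date) -> dict:
    """Gate every outbound PHI call on the vendor's BAA status."""
    problem = baa_problem(vendor, today)
    if problem is not None:
        raise ComplianceError(problem)
    # Placeholder: a real client would call the vendor's API over TLS here.
    return {"vendor": vendor.name, "fields_sent": sorted(payload)}
```

Placing the check inside the single function that sends PHI means a missing or lapsed agreement fails loudly at call time instead of surfacing during an audit.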

Vibe coding tools and AI app builders make this worse. They produce prototypes that are functional and look production-ready but ship without encryption in transit, audit trail infrastructure, or the role-based access controls that OCR investigators examine first during breach investigations.

The Risk Analysis Gap That Triggers Most Penalties

OCR's enforcement data tells a clear story. The majority of HIPAA penalties in 2025 targeted Security Rule failures, with risk analysis deficiencies as the most common finding. For 2026, HIPAA violation fines start at $13,785 per violation and scale up to $2 million per year for willful neglect that an organization fails to correct.

A risk analysis for an AI-powered healthcare app must cover territory that traditional assessments never touch.

- Model training pipelines that ingest PHI from EHR systems, wearable devices, and patient-reported data need written documentation of how that data flows, where it persists, and who can access it at each stage.

- Inference endpoints that generate clinical recommendations must log every input-output pair with timestamps, user identifiers, and access credentials to satisfy the Security Rule's audit control requirements under 45 CFR 164.312(b).

- Engineering leaders who skip this analysis during the build phase discover the gaps during an OCR investigation, when remediation costs compound with penalty exposure and reputational damage.


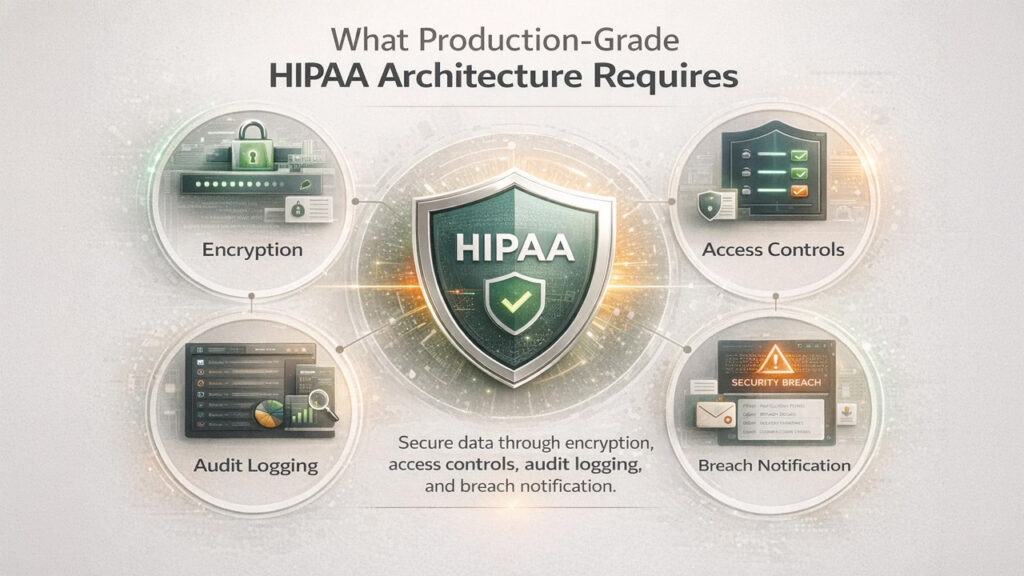

What Production-Grade HIPAA Architecture Requires

Four architectural decisions, made before any production code is written, separate a compliant healthcare app from a vulnerable one. Data encryption must operate at rest and in transit using AES-256 and TLS 1.3, with key management handled by a dedicated service that maintains rotation schedules and access policies separately from the application layer.
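The in-transit requirement and the rotation schedule can be sketched with Python's standard `ssl` and `datetime` modules. AES-256 encryption at rest is usually delegated to the storage layer or a key management service, so it is not shown. The function names are illustrative, and the 365-day rotation period is a hypothetical policy value, not a HIPAA figure.

```python
import ssl
from datetime import datetime, timedelta, timezone


def make_phi_client_context() -> ssl.SSLContext:
    """Client-side TLS context that refuses any protocol older than TLS 1.3."""
    ctx = ssl.create_default_context()  # certificate and hostname verification enabled
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


def rotation_due(key_created: datetime, now: datetime,
                 max_age: timedelta = timedelta(days=365)) -> bool:
    """True when a data-encryption key has reached the (hypothetical) maximum age."""
    return now - key_created >= max_age
```

Services that exchange PHI would pass this context to their HTTP or socket client, so a downgrade to an older protocol fails the handshake instead of silently succeeding.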

Access controls must enforce role-based permissions to limit PHI visibility to the minimum necessary standard. A nurse, a billing coordinator, and a data scientist training a readmission model each require different access scopes. The system must enforce those boundaries at the API level, not through application-layer workarounds.
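A minimal Python sketch of role-scoped, minimum-necessary responses enforced in the API handler itself. The role names, field names, and `ROLE_SCOPES` table are hypothetical; a production system would load scopes from a policy store and derive the caller's role from an authenticated session rather than accept it as an argument.

```python
from typing import Dict, FrozenSet

# Hypothetical role-to-field scopes for the nurse / billing / data scientist example.
ROLE_SCOPES: Dict[str, FrozenSet[str]] = {
    "nurse": frozenset({"patient_id", "name", "vitals", "medications"}),
    "billing": frozenset({"patient_id", "name", "insurance_id", "billing_codes"}),
    "data_scientist": frozenset({"vitals", "medications", "age_band"}),  # no direct identifiers
}


def minimum_necessary_view(role: str, record: dict) -> dict:
    """Return only the fields the caller's role is permitted to see."""
    scope = ROLE_SCOPES.get(role)
    if scope is None:
        raise PermissionError(f"unknown role: {role!r}")  # deny by default
    return {field: value for field, value in record.items() if field in scope}


def get_patient(role: str, patient_id: str, store: Dict[str, dict]) -> dict:
    """API handler: the scope is applied before any data leaves the service."""
    return minimum_necessary_view(role, store[patient_id])
```

Because filtering happens in the handler, no client, including a model-training job, can receive fields outside its role's scope, whatever the UI chooses to display.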

Audit logging must capture every access, change, and disclosure of PHI in immutable records that persist for the six-year retention period HIPAA requires. This is even more critical in healthcare data analytics environments, where tracking data lineage, model behavior, and analytical outputs is essential for both compliance and clinical accountability. AI-specific logs must also track model version changes, training data lineage, and inference confidence scores.
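One way to make audit records tamper-evident is hash chaining, where each entry includes the SHA-256 hash of the entry before it. The sketch below is a hypothetical in-memory illustration, not a storage system: true immutability would still come from write-once storage, and the six-year window is approximated as 6 * 365 days. AI-specific events such as model version changes go through the same `append` call.

```python
import hashlib
import json
from datetime import datetime, timedelta, timezone
from typing import List

RETENTION = timedelta(days=6 * 365)  # approximates the six-year retention period
GENESIS = "0" * 64                   # placeholder "previous hash" for the first entry


def _hash(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class HashChainedAuditLog:
    """Append-only log where each entry commits to the hash of the previous one."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, event: dict, at: datetime) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"at": at.isoformat(), "event": event, "prev_hash": prev_hash}
        entry = {**body, "hash": _hash(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited, removed, or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("at", "event", "prev_hash")}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _hash(body):
                return False
            prev_hash = entry["hash"]
        return True

    def past_retention(self, now: datetime) -> List[dict]:
        """Entries older than the retention window; disposal would be a separate, reviewed step."""
        return [e for e in self.entries if now - datetime.fromisoformat(e["at"]) > RETENTION]
```

Periodically anchoring the latest hash somewhere outside the log (for example, in a separate system) would make it harder to rewrite the whole chain at once.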

Breach notification infrastructure must detect unauthorized access quickly and automatically trigger workflows that satisfy both the federal HIPAA requirement (notification no later than 60 days after a breach is discovered) and state-level breach notification laws, which vary across all 50 states.
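A deadline calculator makes the federal timing concrete. This minimal Python sketch encodes a simplified reading of the HIPAA Breach Notification Rule (45 CFR 164.404 to 164.408): individual notice no later than 60 calendar days after discovery, HHS notice within that same window for breaches affecting 500 or more individuals (otherwise within 60 days after the end of the calendar year), and media notice when more than 500 residents of one state are affected. State deadlines are passed in as a hypothetical table with placeholder state names, because the real rules vary by jurisdiction and belong with legal counsel.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List, Tuple

SIXTY_DAYS = timedelta(days=60)  # outer limit; notice is due without unreasonable delay


@dataclass(frozen=True)
class Breach:
    discovered: date                   # date of discovery, not when access began
    affected_by_state: Dict[str, int]  # state -> affected residents


def notification_plan(breach: Breach,
                      state_deadline_days: Dict[str, int]) -> List[Tuple[date, str]]:
    """Return (deadline, obligation) pairs sorted earliest first.

    state_deadline_days is a hypothetical counsel-maintained table, not a
    summary of any actual state statute.
    """
    d = breach.discovered
    total = sum(breach.affected_by_state.values())
    plan = [(d + SIXTY_DAYS, "Notify affected individuals (HIPAA)")]
    if total >= 500:
        plan.append((d + SIXTY_DAYS, "Notify HHS (HIPAA, 500 or more individuals)"))
    else:
        plan.append((date(d.year, 12, 31) + SIXTY_DAYS, "Log breach with HHS (HIPAA annual report)"))
    for state, count in sorted(breach.affected_by_state.items()):
        if count > 500:
            plan.append((d + SIXTY_DAYS, f"Notify prominent media in {state} (HIPAA)"))
        if state in state_deadline_days:
            plan.append((d + timedelta(days=state_deadline_days[state]), f"State notice: {state}"))
    return sorted(plan)
```

A detection system would call this the moment an incident is confirmed as a breach, so the earliest deadline, often a state one, drives the response timeline.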

5 Trusted AI-Driven Healthcare App Development Partners in the USA (2026–27)

Enterprise teams moving from prototype to HIPAA-compliant production often look for partners with proven healthcare engineering experience. The following firms operate in the United States and carry verified reviews on the Clutch platform.

1. GeekyAnts

GeekyAnts is a global technology consulting firm specializing in digital transformation, end-to-end app development, digital product design, and custom software solutions. The firm has delivered more than 800 projects, including HIPAA-compliant healthcare applications such as telemedicine platforms, mental health apps, EHR integrations, and AI-powered clinical tools built on React Native, Flutter, Next.js, and Node.js.

- Clutch Rating: 4.9/5 (112+ Verified Reviews)
- Address: 315 Montgomery Street, 9th & 10th Floors, San Francisco, CA 94104, USA
- Phone: +1 845 534 6825
- Email: info@geekyants.com
- Website: www.geekyants.com/en-us

2. Agency Partner Interactive

Agency Partner Interactive is a digital transformation firm headquartered in Plano, Texas. Founded in 2012, the company builds custom healthcare platforms, patient portals, and HIPAA-compliant web applications. Their team serves clients across healthcare, legal, and B2B services, with a structured approach to regulatory compliance and secure data architecture.

- Clutch Rating: 4.9/5 (66 Verified Reviews)
- Address: 5830 Granite Pkwy, Suite 100-235, Plano, TX 75024, USA
- Phone: (214) 295-5845

3. LITSLINK

LITSLINK is a Palo Alto-headquartered software development firm that delivers full-cycle mobile, web, and AI-powered applications to 300+ global clients. The team has particular strength in health tech platforms, machine learning integration, and HIPAA-compliant data pipelines, with senior engineers who average 7+ years of production experience.

- Clutch Rating: 4.8/5 (78 Verified Reviews)
- Address: 530 Lytton Ave, Floor 2, Palo Alto, CA 94301, USA
- Phone: (650) 865-1800

4. Blue Label Labs

Blue Label Labs is a New York-based product development agency that won the 2025 Clutch Global AI Award. The firm builds healthcare applications for providers and funded startups, with completed projects for fertility clinics, behavioral healthcare systems, and tribal health organizations. Their team handles HIPAA-compliant telehealth platforms with React Native, Stripe, and Azure integrations.

- Clutch Rating: 4.7/5 (68 Verified Reviews)
- Address: 18 West 18th Street, New York, NY 10011, USA
- Phone: (206) 819-1460

5. EffectiveSoft

EffectiveSoft is a San Diego-headquartered custom software company founded in 2003, with 340+ engineers across four U.S. offices. Clutch recognizes the firm as a Top Artificial Intelligence Company and Top Software Developer. Their healthcare practice covers NLP-powered clinical chatbots, patient data analytics, and medical software with HIPAA and GDPR compliance built into every layer.

- Clutch Rating: 4.8/5 (19 Verified Reviews)
- Address: 4445 Eastgate Mall, Suite 200, San Diego, CA 92121, USA
- Phone: 1-800-288-9659

Final Thoughts

The clinical value that AI brings to healthcare app development is real. Features such as smart diagnostic support, faster triage, and personalized treatment recommendations improve outcomes for patients while also reducing operational burden on providers.

However, failure starts when organizations treat AI-generated prototypes as production infrastructure. Healthcare apps demand encryption at every data layer, role-based access controls that enforce the minimum necessary standard, audit trails that satisfy six-year retention requirements, and breach detection systems that support the 60-day federal notification deadline.

Engineering leaders who survive OCR investigations and earn patient trust are the ones who invest in compliance architecture before they write production code. In contrast, those who skip this step spend the following year remediating gaps that a structured 30-minute architecture review with an experienced consulting partner would have identified from the start. Building an AI-powered healthcare app without these safeguards leads to costly remediation, penalties, and long-term reputational damage.
