You might have seen automation projects that looked excellent in architecture diagrams and still disappointed the people who had to rely on them every day. The workflows were connected, the business rules were documented, and the dashboards said everything was moving in the right direction. But once the system had to face situations where visual data was unreliable, confidence started to fall. That is usually the point when teams begin looking into synthetic data for computer vision, not because they want something flashy, but because they finally realize the system is only as dependable as the data conditions it was trained to handle.

Automation works best when inputs are predictable

Most business automation is built around consistency. A form is submitted in a known format. A record enters the CRM with expected fields. A workflow triggers based on values that can be read cleanly and routed with confidence.

That world is structured by design. It rewards clarity, repetition, and control. When a company automates lead routing, follow-up sequences, invoice approvals, or support workflows, the assumption is that the input layer is stable enough for logic to work.

The moment visual interpretation enters the process, that stability starts to disappear.

An image, scan, camera feed, or scene captured in the wild does not behave like a CRM field. It carries ambiguity. Lighting changes. Angles shift. Objects overlap. Background clutter introduces noise. A human can often adapt without much effort, but an automated system cannot improvise beyond what its training allows.

That is the point many teams underestimate. They think the automation is failing in the workflow layer, when in reality it is failing in perception.

The problem usually starts earlier than people think

When teams discuss automation breakdowns, they often focus on the visible symptoms. A detection is missed. A classification is wrong. A downstream workflow triggers the wrong action. A person has to step in manually.

Those are real problems, but they are late-stage problems. By the time they show up, the deeper issue has usually been present for months.

The system was trained on data that made the world look simpler than it really is.

This happens in quiet ways. The training set may contain mostly clean examples. Rare scenarios may be missing. Environmental variation may be far narrower than production reality. The model may perform well in testing because the test environment resembles the training environment too closely.

Then deployment begins, and the visual world stops behaving politely.

What looked like an automation issue turns out to be a data environment issue. The logic downstream is doing exactly what it was told to do. The problem is that the system upstream never learned how to see the conditions it now has to interpret.

Business teams often assume more data will fix it

This is one of the most common mistakes experts see.

A team notices inconsistency and decides to collect more data. More examples, more images, more footage, more edge cases if possible. On the surface, that sounds reasonable. If the model is struggling, surely more real-world data will help.

Sometimes it does. Often it does not.

More data is only useful if it improves coverage in meaningful ways. If the pipeline keeps collecting the same dominant conditions, the system gets better at what it already knows and remains weak where it matters most. The gaps stay in place. The blind spots become harder to diagnose because the dataset is now larger and more complex, but not substantially more useful.

In business terms, teams confuse scale with reliability. They invest more effort in feeding the system without improving the structure of what the system learns from.
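The difference between scale and coverage can be made concrete with a small sketch. It assumes each image has been tagged with a lighting condition and a camera angle; the tag names, the `coverage` helper, and the `min_count` threshold are illustrative choices, not part of any particular toolkit.

```python
from collections import Counter

# Hypothetical condition tags; a real project would define its own.
LIGHTING = ["daylight", "low_light", "glare"]
ANGLE = ["frontal", "oblique"]


def coverage(samples, min_count=5):
    """Fraction of (lighting, angle) cells holding at least min_count samples."""
    counts = Counter(samples)
    cells = [(light, angle) for light in LIGHTING for angle in ANGLE]
    covered = sum(1 for cell in cells if counts[cell] >= min_count)
    return covered / len(cells)


# A small dataset dominated by one condition.
small = [("daylight", "frontal")] * 100 + [("low_light", "frontal")] * 5

# "More data": ten thousand extra samples of the already dominant condition.
bigger = small + [("daylight", "frontal")] * 10_000

# "Better data": ten extra samples aimed at cells that were empty.
targeted = small + [("glare", "oblique")] * 5 + [("low_light", "oblique")] * 5

for name, data in [("small", small), ("bigger", bigger), ("targeted", targeted)]:
    print(f"{name:9s} size={len(data):6d} coverage={coverage(data):.2f}")
```

In this toy setup the much larger dataset covers exactly the same cells as the small one, while ten targeted samples double the coverage. Real coverage metrics would be richer than a two-variable grid, but the pattern is the same.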

Real-world visual data is not aligned with business needs

The deeper issue is that real-world data is not gathered for the convenience of model training. It is produced by operations, devices, environments, and people who are not optimizing for machine learning.

That means the data arrives with all kinds of asymmetry.

Some situations occur constantly but are not very informative. Others are highly informative but rare. Some scenarios are too costly to capture at scale. Others are difficult to annotate consistently. In regulated settings, parts of the visual record may be restricted, masked, or unusable. In fast-moving environments, by the time enough data is collected, the business context may already have changed.

Automation depends on repeatable interpretation, but real-world visual inputs are not naturally organized around repeatability.

This is why teams that are otherwise strong at workflow design get stuck. They are good at process logic, integration strategy, and operational orchestration. What they lack is control over the perceptual layer, and without that control the automation stack rests on unstable ground.

Visual reliability is an infrastructure issue

Once you realize this, a lot of “mysterious” automation failures stop looking mysterious.

If a business process depends on what a machine can detect, classify, or segment visually, then the visual layer is part of the infrastructure. It is not a side component. It is not just model training. It is a system dependency.

That means it needs the same kind of rigor organizations already apply to other parts of their stack.

They version application code. They monitor integrations. They document workflows. They manage permissions. They test release changes.

But they often do not apply comparable discipline to the visual conditions their AI systems rely on.

What scenes were represented in training? What changed between one dataset version and the next? Which variables were intentionally tested? Which failure modes were never simulated? What environmental conditions are still underrepresented?

Without answers to those questions, automation remains fragile even when everything around it looks mature.
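One lightweight way to start answering those questions is to keep a manifest per dataset version and compare manifests the way code changes are compared. The sketch below is only an illustration under assumed names: `DatasetManifest`, `diff_manifests`, `underrepresented`, and the condition tags are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DatasetManifest:
    """Hypothetical record of which visual conditions a dataset version contains."""
    version: str
    condition_counts: dict  # condition tag -> number of images


def diff_manifests(old, new):
    """Return the per-condition change in image count, omitting unchanged tags."""
    tags = sorted(set(old.condition_counts) | set(new.condition_counts))
    changes = {}
    for tag in tags:
        delta = new.condition_counts.get(tag, 0) - old.condition_counts.get(tag, 0)
        if delta != 0:
            changes[tag] = delta
    return changes


def underrepresented(manifest, required_tags, min_count):
    """List required conditions that have fewer than min_count images."""
    return [
        tag for tag in required_tags
        if manifest.condition_counts.get(tag, 0) < min_count
    ]


v1 = DatasetManifest("v1", {"daylight": 900, "low_light": 40})
v2 = DatasetManifest("v2", {"daylight": 1500, "low_light": 40, "glare": 10})

print(diff_manifests(v1, v2))
print(underrepresented(v2, ["daylight", "low_light", "glare", "occlusion"], min_count=50))
```

Here the diff shows that the new version mostly added daylight images, and the second check flags the conditions that are still thin or missing entirely.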

Synthetic environments change what teams can control

This is where synthetic data becomes strategically useful.

Do not think of it as a shortcut. Think of it as a way to move from passive data collection to active data design. Instead of waiting for the world to produce enough examples of the conditions that matter, teams can construct those conditions deliberately.

That changes the conversation.

Rather than asking, “Can we collect enough real examples of this situation?” teams can ask, “What situations does the system need to understand in order to be reliable?”

That is a much better question.

If glare affects detection, it can be modeled. If camera angles vary across environments, that variation can be introduced intentionally. If rare object interactions cause downstream automation errors, those scenarios can be created and repeated until performance becomes measurable.

This is not about replacing reality. It is about giving automation a controlled learning environment where important variables are no longer left to chance.
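As a rough illustration of what designing conditions deliberately can look like, the sketch below enumerates scene specifications across glare, camera pitch, and occlusion. It stops at the specification step: the `SceneSpec` fields, their scales, and the `build_scene_specs` helper are assumptions made for this example, and the renderer that would turn each spec into an image is not shown.

```python
import itertools
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneSpec:
    """Hypothetical description of one synthetic scene to render."""
    glare_intensity: float     # 0.0 = none, 1.0 = strong (assumed scale)
    camera_pitch_deg: int      # camera tilt relative to the assumed default
    occlusion_fraction: float  # share of the target object hidden
    seed: int                  # fixes any remaining randomness in the scene


def build_scene_specs(glare_levels, pitch_angles, occlusion_levels, base_seed=0):
    """Enumerate every combination of the given levels with reproducible seeds."""
    rng = random.Random(base_seed)
    specs = []
    for glare, pitch, occlusion in itertools.product(
        glare_levels, pitch_angles, occlusion_levels
    ):
        specs.append(SceneSpec(glare, pitch, occlusion, seed=rng.randrange(2**31)))
    return specs


specs = build_scene_specs(
    glare_levels=[0.0, 0.5, 1.0],
    pitch_angles=[-15, 0, 15],
    occlusion_levels=[0.0, 0.3],
)
print(len(specs))  # 3 * 3 * 2 = 18 scene specifications
```

Because the seeds derive from `base_seed`, calling the function again with the same arguments yields the same list, so a scenario that caused a failure can be regenerated exactly.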

Why this matters for workflow reliability

Business users do not care whether a failure came from the model or the workflow. They care that the system did not behave reliably.

That is why visual uncertainty damages trust so quickly. Once people start seeing inconsistent outcomes, they adapt in predictable ways. They add manual checks. They create fallback procedures. They use the system selectively instead of fully. Eventually the automation that was meant to reduce operational load starts creating a parallel layer of hidden labor.

At that stage, the company still says it has automation, but the day-to-day reality looks different.

What synthetic environments can offer is not just better training coverage, but better workflow confidence. If the perceptual layer is tested more intentionally, downstream processes behave more consistently. That consistency is what allows teams to remove manual oversight instead of merely shifting it around.

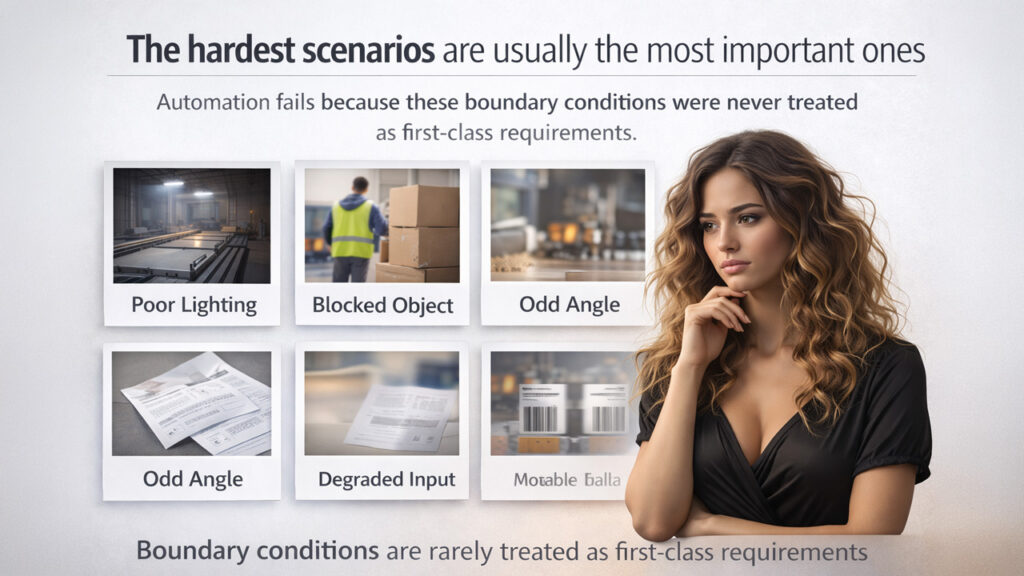

The hardest scenarios are usually the most important ones

One lesson that comes up again and again is that business risk tends to live in the margins.

Normal cases matter, but they are rarely what break trust. Trust is broken by the unusual but operationally meaningful situations that happen just often enough to matter and not often enough to dominate the training set.

A camera feed in poor lighting.

An object partially blocked by another object.

A document photographed at an odd angle.

A visual input degraded by hardware inconsistencies.

A scene that combines multiple small variations the model has never seen together.

These are exactly the kinds of conditions that real-world data pipelines struggle to represent systematically. They occur, but not on schedule. They matter, but not in convenient volumes.

Automation often fails because these boundary conditions were never treated as first-class requirements.

Teams often treat perception as a model problem when it is really a systems problem

This framing matters.

When people think the issue is “the model is not good enough,” they usually respond by tuning, retraining, or swapping architectures. Sometimes that helps at the margin. Often it just extends the cycle.

When they realize the issue is systemic, the strategy improves. They start asking how the world presented to the model is being defined. They evaluate whether training data actually maps to production variability. They treat dataset design, scene construction, and validation coverage as operational responsibilities rather than research leftovers.

That shift is usually where meaningful progress begins.

It also changes who needs to be involved. Suddenly this is not just an ML conversation. Product, operations, design, QA, and infrastructure teams all have a role because the system is no longer being treated as a black box. It is being treated as a business-critical environment that needs to be engineered.

Reproducibility matters more than most teams expect

One of the biggest operational benefits of a more controlled visual data strategy is reproducibility.

When a model behaves unexpectedly, teams need to understand why. With uncontrolled real-world data, that can be surprisingly difficult. Conditions drift. Devices change. Visual contexts evolve. People remember outcomes but not always the exact circumstances that produced them.

Controlled synthetic scenarios make diagnosis easier. You can recreate the conditions. You can vary one factor at a time. You can compare performance across versions with more confidence.

That does not just help engineering. It helps the business. Problems get resolved faster, confidence returns sooner, and decisions stop depending on guesswork.
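The idea of varying one factor at a time and comparing versions can be sketched in a few lines. Everything here is a stand-in: the scenario keys are invented, and `toy_model_v1` and `toy_model_v2` are made-up scoring functions that take the place of evaluating real model versions on rendered scenes.

```python
def one_factor_sweeps(baseline, variations):
    """Yield (factor, value, scenario) where only `factor` differs from baseline."""
    for factor, values in variations.items():
        for value in values:
            scenario = dict(baseline)  # copy so the baseline stays untouched
            scenario[factor] = value
            yield factor, value, scenario


def compare_versions(evaluate_old, evaluate_new, baseline, variations):
    """Score two model versions on identical single-factor scenarios."""
    rows = []
    for factor, value, scenario in one_factor_sweeps(baseline, variations):
        rows.append((factor, value, evaluate_old(scenario), evaluate_new(scenario)))
    return rows


# Toy scoring functions (assumptions for illustration, not real models).
def toy_model_v1(scenario):
    return round(0.95 - 0.4 * scenario["glare"] - 0.002 * abs(scenario["pitch_deg"]), 3)


def toy_model_v2(scenario):
    return round(0.95 - 0.1 * scenario["glare"] - 0.002 * abs(scenario["pitch_deg"]), 3)


baseline = {"glare": 0.0, "pitch_deg": 0}
variations = {"glare": [0.5, 1.0], "pitch_deg": [-30, 30]}

rows = compare_versions(toy_model_v1, toy_model_v2, baseline, variations)
for factor, value, old_score, new_score in rows:
    print(f"{factor}={value}: v1={old_score} v2={new_score}")
```

Because each scenario differs from the baseline in exactly one factor, a score difference between versions can be attributed to that factor rather than to whatever else happened to change in the field.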

Automation becomes durable only when perception is designed in

The most important shift, in my experience, is conceptual.

A lot of automation strategy still assumes perception is something that happens before the business process begins. The machine sees something, produces an output, and the real system starts after that.

But if visual interpretation determines what the workflow believes to be true, then perception is already part of the workflow. It is upstream logic in another form. It shapes every decision that follows.

That is why unreliable visual data damages automation so deeply. It does not create a small defect. It corrupts the conditions under which the rest of the system operates.

The organizations that handle this well stop treating visual data as an input they happen to receive. They treat it as an environment they must design, test, maintain, and improve.

The practical conclusion

When automation fails in visually complex environments, the instinct is often to blame tooling, model choice, or implementation details. Sometimes those are part of the story. More often, the real issue is simpler and more structural.

The system was expected to interpret a world it was never properly prepared to see.

Once teams accept that, the path forward becomes clearer. They stop chasing reliability through patchwork fixes alone. They start building the perceptual foundation with the same seriousness they apply to workflows, integrations, and business logic.

That is the point where automation becomes more than functional. It becomes dependable. And in practice, dependability is what separates a workflow that looks impressive in a demo from one that people are actually willing to trust.

For automation to work, you also need the right tool. Find out the 7 best workflow automation tools in 2026.
